robots.txt - crawler access control

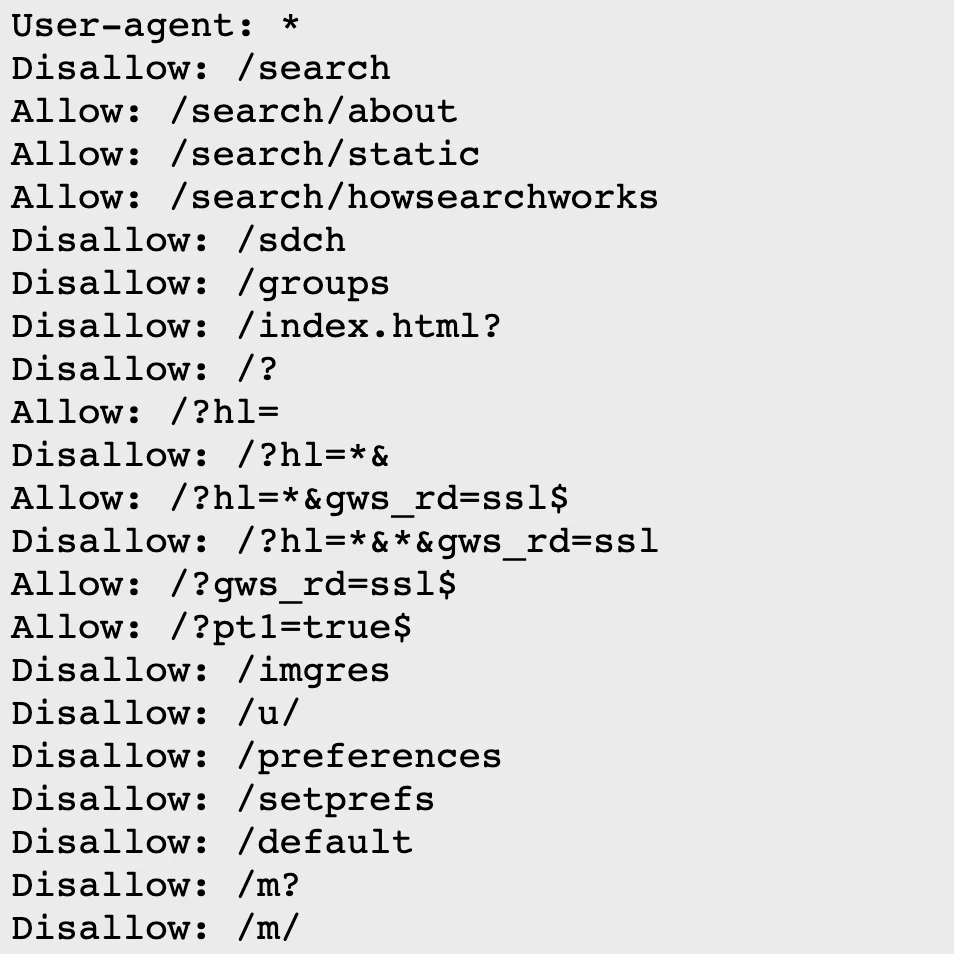

robots.txt is a text file at your site root. Crawlers (Googlebot, Bingbot, etc.) read it first to determine which paths they can access. It became an official internet standard via RFC 9309 in 2022.

User-agent: *

Allow: /

Disallow: /admin/

Sitemap: https://example.com/sitemap.xml

Need to generate one quickly? Use robots.txt Generator.

For detailed syntax and examples, see the robots.txt setup guide.

Why it matters for SEO

Google allocates a crawl budget per site. If crawlers waste time on admin pages or duplicate content, important pages may not get crawled in time. Blocking unnecessary paths with robots.txt lets crawlers focus on what matters.

Important caveats

robots.txt blocks crawling, not indexing. If another site links to a blocked URL, Google can still index the URL itself without seeing the content. Google officially dropped support for the noindex directive in robots.txt in September 2019. To prevent indexing, use meta robots or X-Robots-Tag.

Also, robots.txt is advisory. Legitimate crawlers respect it, but malicious bots ignore it. Don’t use it for security - use server-side authentication instead.

meta robots - per-page index control

meta robots is an HTML tag in <head> that tells crawlers whether to index a page.

<meta name="robots" content="noindex, nofollow">

Key directives:

- noindex - don’t show this page in search results

- nofollow - don’t follow links on this page

- noarchive - don’t show cached version

- max-snippet - limit snippet length

- max-image-preview - limit image preview size (none/standard/large)

Default is index, follow - no need to specify unless you want to restrict.

X-Robots-Tag - HTTP header approach

meta robots only works in HTML. For PDFs, images, or JSON files, use the X-Robots-Tag HTTP response header.

X-Robots-Tag: noindex, nofollow

Same directives as meta robots. Configure in Nginx or Apache for specific file types. You can also target specific crawlers:

X-Robots-Tag: googlebot: noindex

Summary

- robots.txt: controls crawling (“don’t visit this path”)

- meta robots: controls indexing (“don’t show this in search results”)

Blocking crawling with robots.txt while adding noindex is pointless - the crawler can’t see the noindex tag if it can’t access the page. To properly prevent indexing, allow crawling but add noindex.